Nvidia’s DLSS 5 is the most ambitious use of AI the company has ever released. On paper, it should help developers achieve realistic graphics without all the hassle. In practice, the footage Nvidia presented made people cringe and call out its “AI slop” look.

The characters shown have taken the most heat: their faces were waxy and overly smooth, with overpowering lighting that made them look artificial rather than authentic.

Nvidia says it’s up to developers to decide on the style and overall look, yet judging by the examples provided, the technology is going to have a very hard time winning people over.

TL;DR – DLSS 5 Controversy

- DLSS 5 focus: AI-enhanced visuals instead of just performance

- Main backlash: “AI slop” faces, bad lighting, and lost artistic detail

- Big concern: AI may overwrite artists’ original intent

- Nvidia’s claim: Developers control the final look

- Big question: Is this the future of graphics, or a step too far?

DLSS 5: Nvidia Wants More AI in Your Games

For most of its life, DLSS was a feature used primarily to improve performance in games. It used AI (or, more specifically, machine learning) for real-time upscaling and frame generation to deliver more frames per second without sacrificing visual quality.

DLSS 5 takes a drastically different approach. Instead of focusing on performance, Nvidia wants to use AI to improve how games look. The idea makes sense on paper: why spend a lot of power rendering photorealistic graphics and then use AI to generate “fake” frames, when you can render the game at a lower level of detail (and thus faster) and then add detail with AI?

Nvidia’s new tech works by reading a game’s motion vectors and color data for each frame. It then runs that data through a large neural network that reconstructs the scene with new lighting, reworked materials, and finer character detail. Nvidia calls this “neural rendering” and describes it as the biggest GPU technology update the company has released in decades.

Nvidia’s pitch:

- Neural rendering instead of pure brute-force rendering

- AI-enhanced lighting, materials, and character detail

- A major leap comparable to ray tracing

Jensen Huang, Nvidia CEO, described it as something “we haven’t seen since the debut of ray tracing” and has repeatedly called it the GPT moment for graphics.

DLSS 5 Controversy: The “AI Slop” Problem

The complaints started almost immediately after the first DLSS 5 footage was posted online. Characters in games like Starfield, Hogwarts Legacy, and Resident Evil Requiem looked wrong in so many different ways.

Skin was too smooth, pores were erased (or overemphasized), and expressions were distorted into something that looked more like an Instagram beauty filter than a real face. On top of that, you could easily spot AI artifacts in hair or around eyes, and the lighting seemed mostly random, despite Nvidia’s claims.

Thousands of players shared side-by-side comparisons showing characters that were stripped of the small details that artists had put there. AI was clearly overwriting creative decisions with its own averaged-out idea of what a face should look like.

What makes it worse is that Nvidia’s own showcase relied heavily on static shots and limited motion footage. This raised immediate questions about how the technology actually performs when characters move. Nvidia said the footage was generated using two (!) RTX 5090 GPUs, so we can assume the performance cost is far from trivial.

Main criticisms so far:

- Waxy, overly smooth character faces

- Loss of artist-made details

- Visible AI artifacts around hair and eyes

- Lighting that looks unnatural or inconsistent

The Slippery Slope of Letting AI Drive

The optimistic take on DLSS 5 is that it’s a first-generation product, and first-generation products are always rough. Iteration happens, feedback gets incorporated, and the technology matures.

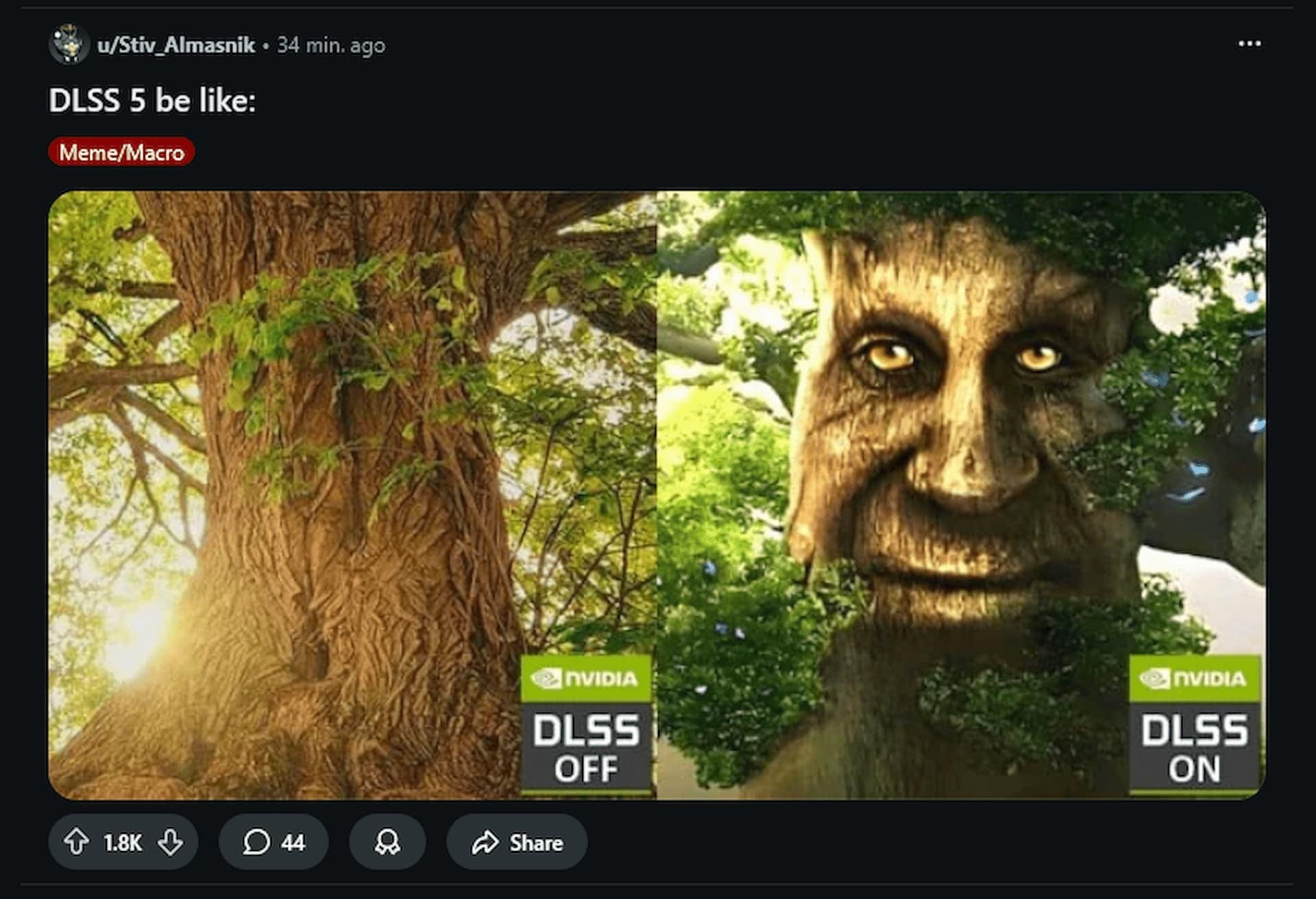

Nvidia showing gamers this and calling it the future:

NO DLSS vs DLSS 5 pic.twitter.com/Dq4g4b1EEt

— NikTek (@NikTek) March 16, 2026

That’s a reasonable position, but it skips over something important.

The problem with AI-generated faces is one of creative ownership. AI models are trained on a specific set of data, and that data shapes the results: if you train a model on red cars only, you cannot expect it to generate blue ones accurately.

So, it is hard to say if and how DLSS 5 will handle unique designs. Will it try to adapt, or will it default to the most “average” and “correct” look?

Developer control is what matters most here. Right now, it’s not clear how much of it actually exists. If studios can control exactly how aggressively DLSS 5 modifies characters, there’s a path forward. If they can’t, it’s going to be a much harder sell.

A Step Forward or a Step Too Far?

DLSS 5 is expected to launch later this year. Nvidia confirmed support across multiple upcoming and existing titles. The rollout will be the real test of how well it performs and what the results look like in actual games.

It’s one thing to react to early footage and demos. It’s another to have the technology sitting inside games you actually own, doing things to characters you’ve spent hours with. Now, we want to hear from you. Do you think AI-generated visuals are a worthy trade for better performance? Or did Nvidia cross the line with an “AI slop” filter applied on top of your favorite characters? Let us know in the comments.